Technology is giving companies superpowers to compete more intelligently and capture the data behind changing trends, expanding markets, and new opportunities.

Hilary Mason

As individual technologies mature, there is a natural tendency to blend these disparate solutions into a harmonized and holistic new innovation. This is the case with several important standalone technologies commonly used in municipal governments and corporations today. The outcome is accretive (1+1=3): the whole is worth more than the sum of its parts. We are now seeing the blending of:

- IoT Networks

- Artificial Intelligence

- Smart Sensors

- Edge Computing

- Vision Systems

- Big Data

- Robotics and Automation

- Security

By adding these standalone ingredients together and stirring them in a proverbial pot, we can cook up some very powerful new innovations. Having data is no longer enough; we must make sense of the data and figure out how to apply it. Data needs to be monetized and deliver value to its owner.

Municipalities and businesses are happy to have more data about their operations, customers, and the results of strategy implementation. The only problem is that, once they have it, they may not know exactly what to do with all that information.

Not knowing the best way to read, understand, and apply data can actually cost your city or company money. Those costs could take the form of lost revenue opportunities, lower efficiency and productivity, quality issues, and more. For example, Forrester reports that between 60 percent and 73 percent of all data within an enterprise goes unused for analytics. And that is despite the fact that more users are talking about big data, using technology to capture more data, and acknowledging the value of this information.

To compound matters, FMI Corp. has published a whitepaper on the impact of big data on the construction industry. In this whitepaper, FMI breaks down some of the most challenging aspects of big data usage, explains the opportunities that present themselves when big data and analytics are properly implemented, and shows the long-term power of utilizing big data as a business tool. Some key findings include:

- Today we produce more data than ever: 2.5 quintillion bytes of data daily.

- 95.5% of all data captured goes unused in the Engineering and Construction (E&C) industry.

- 13% of construction teams’ working hours are spent looking for project data and information.

- 30% of E&C companies are using applications that don’t integrate with one another.

If this is the case in the wealthy E&C industry, what is the picture like in enterprises and municipal governments, where COVID is seriously impacting cash flows today?

While enjoying a dinner with an elite business colleague of mine five years ago, we discussed many advanced data topics. I remarked that if I were 20 years old again and just starting out on my career, I would wish to be a Data Scientist, just like her. The title sounded so cool to me.

However, her reply surprised me. She said that being a Data Scientist was not nearly as romantic a career as I thought. The secret to being a great Data Scientist, she said, was patience, an inquiring mind, persistence, and a lot more patience. Curious, I asked why. She told me that 85% of her work time was spent cleansing dirty data to get it ready to be processed and analyzed. Only about 5% of her time was spent actually running the data through investigative processes, and the remaining 10% of her day was spent trying to make sense of the data that resulted from the process run. I told her that I loved puzzles and challenges and that I was still not discouraged. While we did not know each other very well, she already knew that I did not have the attention span for the work. It takes a special person to be a great Data Scientist.

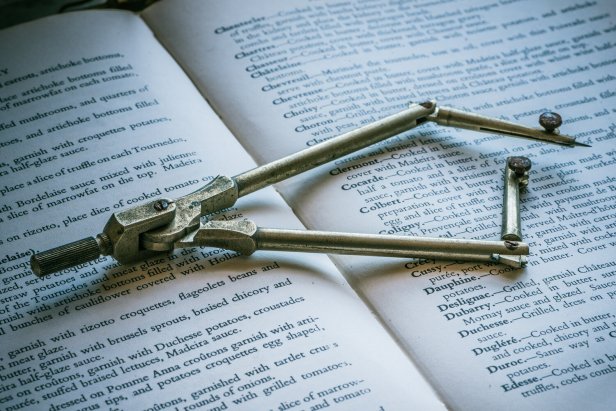

Now, just five short years later, we are seeing big data merged with cloud computing that powers artificial intelligence and enhanced analytic engines. The manual processes are largely behind us as we use automation and tools to cleanse the data and optimize it for the process runs. Once that is completed, we use AI to gain insights and to search for patterns, trends, and anomalies within the data. We use AI to meticulously sift through the data, like a master chef sifts the flour before baking the bread. I use this baking analogy because it closely parallels the AI process and needs. Sifting is a process that breaks up any lumps in the flour and aerates it at the same time by pushing it through a gadget that is essentially a cup with a fine strainer at one end. In preparation, the AI platform will deconstruct and then reconstruct the data in a similar manner to prepare it for the analysis run. Then, it will run it, and finally, AI will review it. By using AI to cook the data, we achieve superior results with greater consistency and a clearer understanding of the data, far better and faster than with the older manual process steps.
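To make the sifting analogy a little more concrete, here is a minimal sketch in Python of the two steps described above: cleansing the data, then searching it for anomalies. Everything in it is an assumption for illustration only: the record layout (`sensor`, `ts`, `value`), the function names, and the choice of a median-based outlier test. Real platforms use far richer tooling, so treat this as a toy, not as how any particular product works.

```python
import math
from statistics import median


def cleanse(raw_readings):
    """Drop missing, unparseable, and duplicate readings (hypothetical record layout)."""
    seen = set()
    clean = []
    for row in raw_readings:
        key = (row.get("sensor"), row.get("ts"))
        try:
            value = float(row.get("value"))
        except (TypeError, ValueError):
            continue  # missing or non-numeric value: a "lump" to sift out
        if math.isnan(value) or key in seen:
            continue  # NaN reading, or a repeat of the same sensor and timestamp
        seen.add(key)
        clean.append({"sensor": key[0], "ts": key[1], "value": value})
    return clean


def find_anomalies(rows, threshold=3.5):
    """Flag readings whose modified z-score (median/MAD based) exceeds the threshold."""
    values = [r["value"] for r in rows]
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        return []  # no spread in the data, so nothing can be ranked as unusual
    return [r for r in rows if 0.6745 * abs(r["value"] - med) / mad > threshold]


raw_readings = [
    {"sensor": "m1", "ts": 1, "value": "20.1"},
    {"sensor": "m1", "ts": 2, "value": "19.8"},
    {"sensor": "m1", "ts": 2, "value": "19.8"},  # duplicate
    {"sensor": "m1", "ts": 3, "value": None},    # missing
    {"sensor": "m1", "ts": 4, "value": "n/a"},   # dirty
    {"sensor": "m1", "ts": 5, "value": "20.4"},
    {"sensor": "m1", "ts": 6, "value": "95.0"},  # suspicious spike
    {"sensor": "m1", "ts": 7, "value": "20.0"},
]

clean_rows = cleanse(raw_readings)
print(len(clean_rows), "usable readings")
print("anomalies:", find_anomalies(clean_rows))
```

The median-based test is used here because a single wild reading barely moves the median, whereas it can distort an average badly on a small sample.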

As an example, making sense of vast streams of big data is getting easier, thanks to an artificial intelligence tool developed at Los Alamos National Laboratory. SmartTensors sifts through millions of millions of bytes of diverse data to find the hidden features that matter, with significant implications from health care to national security, climate modeling to text mining, and many other fields.

“SmartTensors analyzes terabytes of diverse data to find the hidden patterns and features that make the data understandable and reveal its underlying processes or causes,” said Boian Alexandrov, a scientist at Los Alamos National Laboratory, AI expert, and principal investigator on the project. “What our AI software does best is extracting the latent variables, or features, from the data that describe the whole data and mechanisms buried in it without any preconceived hypothesis.”

SmartTensors also can identify the optimal number of features needed to make sense of enormous, multidimensional datasets.

“Finding the optimal number of features is a way to reduce the dimensions in the data while being sure you’re not leaving out significant parts that lead to understanding the underlying processes shaping the whole dataset,” said Velimir (“Monty”) Vesselinov, an expert in machine learning, data analytics, and model diagnostics at Los Alamos and also a principal investigator.
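The idea of finding the right number of hidden features can be illustrated with a small sketch. This is not SmartTensors and does not use its code or API. It is a toy stand-in that assumes a plain non-negative matrix factorization with the well-known multiplicative update rules, and a simple rule of thumb for picking the number of features: stop adding features once the reconstruction error stops improving by much. The function names, the toy matrix, and the `min_gain` cut-off are all assumptions made for illustration.

```python
import random


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    cols_b = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols_b] for row in a]


def transpose(m):
    return [list(col) for col in zip(*m)]


def nmf_error(v, k, iterations=300, seed=0):
    """Factor non-negative v into w @ h with k features; return the reconstruction error."""
    rng = random.Random(seed)
    n, m = len(v), len(v[0])
    w = [[rng.random() + 0.1 for _ in range(k)] for _ in range(n)]
    h = [[rng.random() + 0.1 for _ in range(m)] for _ in range(k)]
    eps = 1e-9  # guards against division by zero
    for _ in range(iterations):
        # Multiplicative update for h: h * (w^T v) / (w^T w h)
        wt = transpose(w)
        num, den = matmul(wt, v), matmul(matmul(wt, w), h)
        h = [[h[i][j] * num[i][j] / (den[i][j] + eps) for j in range(m)] for i in range(k)]
        # Multiplicative update for w: w * (v h^T) / (w h h^T)
        ht = transpose(h)
        num, den = matmul(v, ht), matmul(w, matmul(h, ht))
        w = [[w[i][j] * num[i][j] / (den[i][j] + eps) for j in range(k)] for i in range(n)]
    approx = matmul(w, h)
    return sum((v[i][j] - approx[i][j]) ** 2 for i in range(n) for j in range(m)) ** 0.5


def pick_rank(errors, min_gain=0.2):
    """Smallest k where adding one more feature gains no more than min_gain of the baseline error."""
    ranks = sorted(errors)
    baseline = errors[ranks[0]]
    for k, nxt in zip(ranks, ranks[1:]):
        if errors[k] - errors[nxt] <= min_gain * baseline:
            return k
    return ranks[-1]


# Toy data built from two hidden patterns (a rising and a falling signal).
patterns = [[1, 2, 3, 4, 5], [5, 4, 3, 2, 1]]
weights = [[1, 0], [2, 0], [3, 0], [0, 1], [0, 2], [0, 3]]
data = matmul(weights, patterns)

errors = {k: nmf_error(data, k) for k in (1, 2, 3)}
print("reconstruction error by feature count:", errors)
print("suggested number of features:", pick_rank(errors))
```

The point of the sketch is the shape of the reasoning in the quote: too few features leave out a significant part of the data, while extra features beyond the true number add very little.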

Smart Cities largely leverage smart CCTV cameras to keep the city safe by applying powerful AI-based solutions to the CCTV feeds to automatically detect various kinds of suspicious and/or criminal activities anywhere in the city. Any instance of criminal activity such as assault, brandishing of a weapon, fire, vandalism, and/or suspicious behaviour will immediately be detected by the AI platform linked to the CCTV cameras, at which point an alert is created and assigned accordingly to law enforcement officials.
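The detect, alert, and assign flow described above can be sketched in a few lines of Python. This is a hypothetical illustration only: the `Detection` record, the routing table, the team names, and the confidence cut-off are all assumptions, and no real video analytics product is being described.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    """One event reported by a (hypothetical) video analytics model."""
    camera_id: str
    label: str
    confidence: float


# Hypothetical routing table: which team receives which kind of event.
ROUTES = {
    "assault": "police",
    "weapon": "police",
    "vandalism": "police",
    "fire": "fire_service",
}


def create_alerts(detections, min_confidence=0.8):
    """Turn confident detections of routable events into assigned alerts."""
    alerts = []
    for d in detections:
        team = ROUTES.get(d.label)
        if team is None or d.confidence < min_confidence:
            continue  # unknown event type, or the model is not sure enough
        alerts.append({"camera_id": d.camera_id, "label": d.label, "assigned_to": team})
    return alerts


events = [
    Detection("cam-014", "fire", 0.93),
    Detection("cam-014", "vandalism", 0.55),
    Detection("cam-102", "pedestrian", 0.99),
]
for alert in create_alerts(events):
    print(alert)
```

The confidence cut-off is the design choice that matters most here: set it too low and officials are flooded with false alarms, set it too high and real incidents are missed.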

For forensic work too, the authorities will be able to track the movement of criminals by first scanning through thousands of CCTV feeds based on various known behavioural and clothing attributes and/or facial recognition, when possible, to very quickly piece together the suspected criminal’s journey during the last several days. In the case of road safety, authorities can identify cars and people involved in hit-and-run cases with advanced CCTV capabilities such as number plate recognition and vehicle identification analytics based on colour, make, model, etc.

What determines the ‘smartness’ of a city is the use of technology to improve livability for its citizens. Public safety is an important determinant of livability. None of us would want to live in a city where crime is rampant or where there is an imminent threat to life. AI-powered CCTV cameras with real-time analytics have an essential role to play in this regard. Through 24x7 surveillance and facial recognition technology, city administrators can index and monitor people with criminal backgrounds.

Video analytics today has evolved to such an extent that it can capture any sign of criminal activity, be it a gunshot or a melee in a public space, alert the relevant law enforcement authorities, and reduce the response time for action.

IoT: When AI meets the Internet of Things

The Internet of Things (IoT) is a technology helping us to re-imagine daily life, but artificial intelligence (AI) is the real driving force behind the IoT’s full potential.

From its most basic applications of tracking our fitness levels, to its wide-reaching potential across industries and urban planning, the growing partnership between AI and the IoT means that a smarter future could occur sooner than we think.

IoT devices use the internet to communicate, collect, and exchange information about our online activities. Every day, they generate 1 billion GB of data.

By 2025, there are projected to be between 40 and 50 billion IoT-connected devices globally. It is only natural that as these device numbers grow, the swaths of data will too. That is where AI steps in, lending its learning capabilities to the connectivity of the IoT.

By blending these technologies, we can create the strongest superpowers. Using these superpowers, we can have greater situational awareness that drives better and faster decision making.

Up, up, and away.

————————–MJM ————————–

References:

Barrett, J. (2021). Up to 73 Percent of Company Data Goes Unused for Analytics. Here’s How to Put It to Work: Targeting the right areas and using the right technology can save your bottom line. Inc., Mansueto Ventures. Retrieved on April 7, 2021, from https://www.inc.com/jeff-barrett/misusing-data-could-be-costing-your-business-heres-how.html

Ghosh, I. (2021). 4 key areas where AI and IoT are being combined. World Economic Forum. Retrieved on April 7, 2021, from https://www.weforum.org/agenda/2021/03/ai-is-fusing-with-the-internet-of-things-to-create-new-technology-innovations/

Harrison, P. J. (2021). Graymatics on How the Use of AI in the Smart Cities Scheme Can Help Create a Safer Society. The Fintech Times, Disrupts Media Limited. Retrieved on April 7, 2021, from https://thefintechtimes.com/graymatics-on-how-the-use-of-ai-in-the-smart-cities-scheme-can-help-create-a-safer-society/

Unknown. (2018). Study: 95% of All Data Captured Goes Unused in the Construction and Engineering Industry. FMI Corp. Retrieved on April 7, 2021, from https://www.forconstructionpros.com/business/press-release/21031884/fmi-corp-study-95-of-all-data-captured-goes-unused-in-the-ec-industry

Unknown. (2021). New AI tool makes vast data streams intelligible and explainable. Los Alamos National Laboratory. Retrieved on April 7, 2021, from https://www.newswise.com/articles/new-ai-tool-makes-vast-data-streams-intelligible-and-explainable

————————–MJM ————————–

About the Author:

Michael Martin is the Vice President of Technology with Metercor Inc., a Smart Meter, IoT, and Smart City systems integrator based in Canada. He has more than 35 years of experience in systems design for applications that use broadband networks, optical fibre, wireless, and digital communications technologies. He is a business and technology consultant. He was a senior executive consultant for 15 years with IBM, where he worked in the GBS Global Center of Competency for Energy and Utilities and the GTS Global Center of Excellence for Energy and Utilities. He is a founding partner and President of MICAN Communications and before that was President of Comlink Systems Limited and Ensat Broadcast Services, Inc., both divisions of Cygnal Technologies Corporation (CYN: TSX). Martin currently serves on the Board of Directors for TeraGo Inc (TGO: TSX) and previously served on the Board of Directors for Avante Logixx Inc. (XX: TSX.V). He has served as a Member, SCC ISO-IEC JTC 1/SC-41 – Internet of Things and related technologies, ISO – International Organization for Standardization, and as a member of the NIST SP 500-325 Fog Computing Conceptual Model, National Institute of Standards and Technology. He served on the Board of Governors of the University of Ontario Institute of Technology (UOIT) [now OntarioTech University] and on the Board of Advisers of five different Colleges in Ontario. For 16 years he served on the Board of the Society of Motion Picture and Television Engineers (SMPTE), Toronto Section. He holds three master’s degrees, in business (MBA), communication (MA), and education (MEd). As well, he has three undergraduate diplomas and five certifications in business, computer programming, internetworking, project management, media, photography, and communication technology. He has earned 20 badges in next generation MOOC continuous education in IoT, Cloud, AI and Cognitive systems, Blockchain, Agile, Big Data, Design Thinking, Security, and more.